Hardware Benchmarking Topic: HPC on CPU vs GPU

Introduction

TUFLOW HPC (Heavily Parallelised Compute) can run on both CPUs and NVIDIA CUDA-compatible GPU devices. This page compares simulation speed on both types of hardware. As its name suggests, TUFLOW HPC has been parallelised so that a simulation can execute on multiple cores at once. This code architecture has been implemented to increase simulation speed.

Both CPUs and GPUs typically have multiple cores; however, GPU devices typically have a significantly larger number. For example, an Intel i7-8700K CPU has 6 CPU cores (running at up to 4.7 GHz). By contrast, a GeForce GTX 1080 Ti has a total of 3,584 CUDA cores (running at up to 1.58 GHz). At the time of writing, both the i7-8700K and the GTX 1080 Ti are high-end desktop hardware components. In a one-for-one comparison, CPU cores are typically faster than GPU cores. However, the sheer number of CUDA cores typically means a simulation will run faster on GPU hardware than on CPU hardware.
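As a rough illustration of the core-count argument above, the Python sketch below multiplies core count by peak clock speed for the two example devices. This "core-GHz" figure is an assumption made purely for illustration, not a TUFLOW or vendor metric: it ignores instructions per clock, memory bandwidth and numerical precision, which is why TUFLOW-specific benchmarks such as the one on this page are the better guide to real performance.

```python
# Naive "core-GHz" comparison of the two example devices.
# ASSUMPTION: cores x peak clock is used purely for illustration; it ignores
# instructions per clock, memory bandwidth and numerical precision.

devices = {
    "Intel i7-8700K (CPU)": {"cores": 6, "max_clock_ghz": 4.7},
    "GeForce GTX 1080 Ti (GPU)": {"cores": 3584, "max_clock_ghz": 1.58},
}


def core_ghz(spec: dict) -> float:
    """Return core count multiplied by peak clock speed (GHz)."""
    return spec["cores"] * spec["max_clock_ghz"]


for name, spec in devices.items():
    print(f"{name}: {core_ghz(spec):,.1f} core-GHz")

cpu_core_ghz = core_ghz(devices["Intel i7-8700K (CPU)"])
gpu_core_ghz = core_ghz(devices["GeForce GTX 1080 Ti (GPU)"])
print(f"Naive GPU/CPU ratio: {gpu_core_ghz / cpu_core_ghz:.0f}x")
```

The naive ratio of roughly 200 is far larger than any speed-up measured on this page, which reinforces that core count and clock speed alone do not predict TUFLOW HPC performance.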

Computation Speed

The speed at which TUFLOW HPC can solve a model depends on more than just the number of cores and the processor clock speed. Other factors include the instruction set architecture, the microarchitecture, the precision of the computations, and the design and size (number of cells) of the TUFLOW model. For this reason, hardware benchmarks specific to TUFLOW provide a better indication of the relative performance of systems than a general discussion of hardware components.

See also:

- Floating Point Operations per Second (FLOPS) on Wikipedia.

- TUFLOW Benchmark Model Results

- Page 24-25 of Rapid and Accurate Stormwater Drainage Assessments Using GPU Technology (IECA-SQ Conference - Brisbane, Australia)

Test Case

The benchmark model used for this testing is based on FMA Challenge Model 2, issued prior to the 2012 Flood Managers Association (FMA) Conference in Sacramento, USA. The model includes a coastal floodplain with two ocean outlets and simulates a flood over a 72 hour period. It uses a 20 m cell resolution, which translates to 182,000 cells overall, of which approximately 115,000 cells are wet at the peak of the flood.

Refer to the FMA Challenge Model 2 wiki page for a full description of the model.

Results

The simulations were conducted on a computer with the following hardware:

- CPU: Intel Core i7-5960X @ 3.00 GHz, providing 8 physical CPU cores. Hyper-threading was disabled.

- GPU: NVIDIA GeForce GTX 1080 Ti GPU card (3584 CUDA cores).

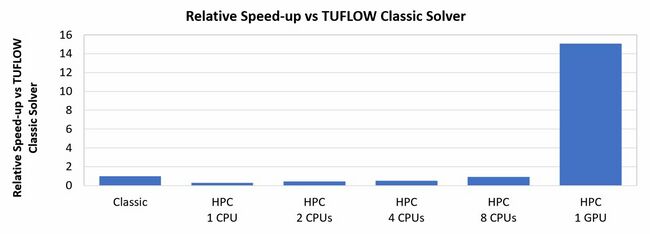

The table below presents runtimes for the same model run using TUFLOW Classic and TUFLOW HPC on CPU. The TUFLOW HPC simulations were run on 1, 2, 4 and 8 CPU cores. The TUFLOW HPC simulation was also run using GPU hardware.

| Solver | TUFLOW Classic | TUFLOW HPC | TUFLOW HPC | TUFLOW HPC | TUFLOW HPC | TUFLOW HPC |
|---|---|---|---|---|---|---|
| Hardware | 1 CPU core | 1 CPU core | 2 CPU cores | 4 CPU cores | 8 CPU cores | 1 GPU |
| Simulation runtime (min) | 97.9 | 339.8 | 226.4 | 188.0 | 106.68 | 6.5 |
| Speed-up relative to TUFLOW Classic | N/A | 0.29 | 0.43 | 0.52 | 0.91 | 15.06 |
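Each speed-up value in the table is the TUFLOW Classic runtime divided by the runtime of the configuration in question, so values below 1 mean the configuration was slower than TUFLOW Classic. A minimal Python sketch of that calculation, using the runtimes from the table, is:

```python
# Runtimes in minutes, taken from the benchmark table.
CLASSIC_RUNTIME_MIN = 97.9

hpc_runtime_min = {
    "HPC, 1 CPU core": 339.8,
    "HPC, 2 CPU cores": 226.4,
    "HPC, 4 CPU cores": 188.0,
    "HPC, 8 CPU cores": 106.68,
    "HPC, 1 GPU": 6.5,
}


def speed_up(reference_min: float, candidate_min: float) -> float:
    """Ratio of runtimes; a value above 1 means the candidate ran faster."""
    return reference_min / candidate_min


speed_ups = {
    config: speed_up(CLASSIC_RUNTIME_MIN, runtime)
    for config, runtime in hpc_runtime_min.items()
}

for config, value in speed_ups.items():
    print(f"{config:<17} {value:6.2f}")
```

Computed this way, the 8-core case comes out at about 0.92 rather than the 0.91 listed; the small difference is presumably due to rounding or truncation in the published figures.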

Discussion

TUFLOW Classic vs TUFLOW HPC on CPU

The results show that TUFLOW Classic runs much faster than TUFLOW HPC when both are run using a single (1) CPU core.

TUFLOW Classic uses an implicit solution scheme. Implicit schemes are difficult to parallelise efficiently, so TUFLOW Classic can only run on a single CPU core. TUFLOW HPC uses an explicit solver, and explicit solvers are well suited to code parallelisation. Computationally, however, implicit schemes can achieve a stable solution at a larger timestep than explicit schemes. For this benchmark test the model ran using a 6 second timestep in TUFLOW Classic (implicit), while the adaptive timestep used by TUFLOW HPC (explicit) ranged from 1.7 to 2.3 seconds. The larger timestep used by TUFLOW Classic helps it run faster than TUFLOW HPC when both are limited to a single CPU core.
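The effect of the timestep on workload can be checked with simple arithmetic. The Python sketch below counts the timesteps each solver needs to cover the 72 hour event, using the 6 second Classic timestep and the 1.7 to 2.3 second range of the HPC adaptive timestep. Because the HPC timestep varies during the run, the result is a range rather than an exact count.

```python
# Timesteps needed to simulate the 72 hour event with each solver.
SIMULATION_HOURS = 72
duration_s = SIMULATION_HOURS * 3600

CLASSIC_DT_S = 6.0                      # fixed timestep, TUFLOW Classic (implicit)
HPC_DT_MIN_S, HPC_DT_MAX_S = 1.7, 2.3   # adaptive timestep range, TUFLOW HPC (explicit)

classic_steps = duration_s / CLASSIC_DT_S
# Smallest timestep gives the most steps; largest timestep gives the fewest.
hpc_steps_high = duration_s / HPC_DT_MIN_S
hpc_steps_low = duration_s / HPC_DT_MAX_S

print(f"Classic: {classic_steps:,.0f} steps")
print(f"HPC:     {hpc_steps_low:,.0f} to {hpc_steps_high:,.0f} steps")
print(f"Ratio:   {hpc_steps_low / classic_steps:.1f}x to "
      f"{hpc_steps_high / classic_steps:.1f}x as many steps for HPC")
```

TUFLOW HPC therefore performs roughly 2.6 to 3.5 times as many timesteps as TUFLOW Classic for this model. The single-core runtime ratio (339.8 / 97.9, about 3.5) falls within this range, which suggests that most of TUFLOW Classic's single-core advantage in this test comes from its larger timestep.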

As expected, TUFLOW HPC simulations run faster as the number of CPU cores increases. For this benchmark test, 8 CPU cores were necessary for TUFLOW HPC to achieve approximately the same speed as TUFLOW Classic on a single CPU core. Testing across a large range of models has shown that the number of CPU cores required to match TUFLOW Classic typically varies from 6 to 10, depending on the features included in a model.
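One way to quantify how well the CPU runs scale is strong-scaling (parallel) efficiency: the speed-up over the single-core TUFLOW HPC run divided by the number of cores used, where 100% would mean perfectly linear scaling. The Python sketch below applies this standard definition to the runtimes in the results table; it is an illustrative calculation, not part of the TUFLOW benchmark itself.

```python
# TUFLOW HPC runtimes on CPU (minutes) from the benchmark table, keyed by core count.
hpc_cpu_runtime_min = {1: 339.8, 2: 226.4, 4: 188.0, 8: 106.68}

single_core_min = hpc_cpu_runtime_min[1]
efficiency_by_cores = {}

for cores, runtime in hpc_cpu_runtime_min.items():
    scaling_speed_up = single_core_min / runtime  # relative to HPC on 1 core
    efficiency = scaling_speed_up / cores         # 1.0 = perfectly linear scaling
    efficiency_by_cores[cores] = efficiency
    print(f"{cores} core(s): speed-up {scaling_speed_up:.2f}, efficiency {efficiency:.0%}")
```

For this model the efficiency falls from 75% on 2 cores to about 40% on 8 cores, so each additional core contributes progressively less to the overall speed.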

This section does not consider TUFLOW HPC simulation speed with GPU acceleration; refer to the section below for discussion relating to GPU.

TUFLOW HPC on CPU vs GPU

Even though one CPU core is typically faster than one GPU CUDA core, the HPC solver running on the i7-5960X using 8 CPU cores (106.68 minutes) is much slower than on the NVIDIA GeForce GTX 1080 Ti GPU card using 3,584 CUDA cores (6.5 minutes).

This testing highlights the clear advantage GPU hardware has over CPU for parallelised computing using TUFLOW HPC.
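The size of the GPU advantage can be read directly from the TUFLOW HPC runtimes in the results table. The Python sketch below computes it and also makes a crude per-core comparison by dividing the GPU speed-up by the ratio of core counts (3,584 CUDA cores versus 8 CPU cores). That per-core figure rests on an assumption that every core on both devices is fully utilised, which real simulations do not achieve, so treat it as illustrative only.

```python
# TUFLOW HPC runtimes (minutes) from the benchmark table.
HPC_CPU_1_CORE_MIN = 339.8
HPC_CPU_8_CORES_MIN = 106.68
HPC_GPU_MIN = 6.5

CPU_CORES = 8
CUDA_CORES = 3584

gpu_vs_1_core = HPC_CPU_1_CORE_MIN / HPC_GPU_MIN
gpu_vs_8_cores = HPC_CPU_8_CORES_MIN / HPC_GPU_MIN

# Crude average: assumes every core on both devices is fully utilised.
per_core_ratio = gpu_vs_8_cores / (CUDA_CORES / CPU_CORES)

print(f"GPU vs 1 CPU core:  {gpu_vs_1_core:.1f}x faster")
print(f"GPU vs 8 CPU cores: {gpu_vs_8_cores:.1f}x faster")
print(f"One CUDA core vs one CPU core (crude): {per_core_ratio:.1%} of the throughput")
```

In this test the GPU run is about 16 times faster than the 8-core CPU run, while each CUDA core, on this crude average, delivers under 4% of the throughput of one i7-5960X core, consistent with individual CPU cores being faster.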

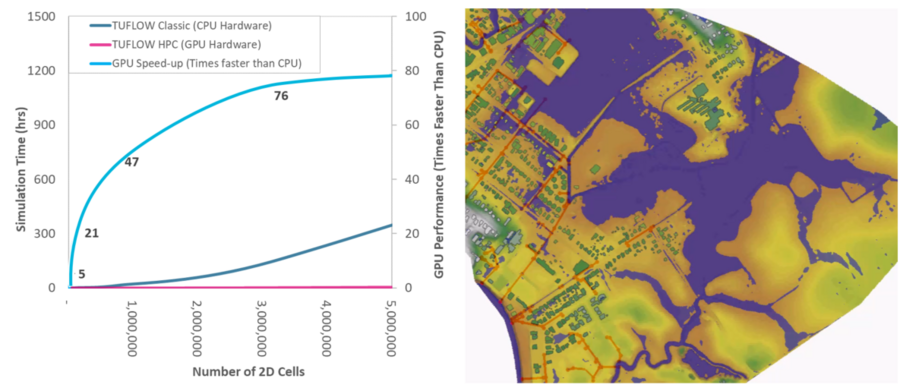

TUFLOW HPC GPU Speed-up vs Model Size

TUFLOW HPC using GPU hardware is approximately 15 times faster than TUFLOW Classic using CPU in this example. This speed-up ratio is not directly transferable to all situations, because numerous model features influence it. One of the most significant of these is model size (in terms of number of cells). Chris Huxley's 2017 IECA-SQ Conference presentation highlights the relationship between model size and the GPU speed-up ratio: (https://www.tuflow.com/Download/Presentations/2017/Huxley2017_IECA-SQ%20Conference_Brisbane_pdf.pdf)

The research findings are summarised in the figure and table below:

| Model size (wet cell count) | TUFLOW Classic simulation time (h:mm) | TUFLOW HPC (GPU) simulation time (h:mm) | GPU speed-up ratio (times faster than TUFLOW Classic on CPU) |
|---|---|---|---|
| 7,500 | 0:12 | 0:03 | 4 |
| 31,000 | 0:15 | 0:03 | 5 |
| 125,000 | 1:32 | 0:05 | 21 |
| 750,000 | 15:19 | 0:20 | 47 |
| 3,100,000 | 146:00 | 1:55 | 76 |
| 12,500,000 | 1152:00 | 18:30 | 78 |

The research findings highlight how GPU to CPU speed-up increases with model size (computation cell count).

This relationship has been attributed to the architecture of the TUFLOW HPC code. TUFLOW HPC is parallelised using domain decomposition: the model domain is split into smaller tiles that are passed to different CUDA cores on a GPU card for the hydrodynamic computations. Information is recombined at regular intervals to pass data between the CUDA cores and maintain result continuity. Because of this architecture, two key tasks contribute to a model's simulation time: the hydrodynamic computations, and the communication overhead associated with passing information between the CUDA cores. In smaller models the communication overhead dominates the overall simulation time, while in larger models the hydrodynamic computations dominate. This difference explains the increase in GPU speed-up ratio as model size increases.
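The explanation above can be illustrated with a toy cost model. The Python sketch below assumes that, per timestep, CPU time grows linearly with cell count, while GPU time is a fixed overhead plus a much smaller per-cell cost. All three cost constants are hypothetical values chosen only to show the shape of the curve; they are not measured TUFLOW figures, and the printed ratios should not be compared with the table.

```python
# Toy per-timestep cost model. All constants are HYPOTHETICAL, chosen only to
# show the shape of the curve; they are not measured TUFLOW values.
CPU_S_PER_CELL = 1.0e-6   # CPU compute cost per cell per timestep
GPU_S_PER_CELL = 1.0e-8   # GPU compute cost per cell per timestep
GPU_OVERHEAD_S = 1.0e-3   # fixed GPU communication overhead per timestep


def cpu_step_time(cells: int) -> float:
    """CPU time per timestep: proportional to the number of cells."""
    return CPU_S_PER_CELL * cells


def gpu_step_time(cells: int) -> float:
    """GPU time per timestep: fixed overhead plus a small per-cell cost."""
    return GPU_OVERHEAD_S + GPU_S_PER_CELL * cells


def gpu_speed_up(cells: int) -> float:
    """Ratio of CPU to GPU time per timestep for a model of the given size."""
    return cpu_step_time(cells) / gpu_step_time(cells)


for cells in (7_500, 125_000, 3_100_000, 12_500_000):
    print(f"{cells:>12,} cells: {gpu_speed_up(cells):5.1f}x")

# As cells grow the overhead is amortised and the ratio approaches this limit.
print(f"Limit: {CPU_S_PER_CELL / GPU_S_PER_CELL:.0f}x")
```

Because the fixed overhead is amortised over more cells as the model grows, the modelled speed-up rises with model size and levels off towards the ratio of the per-cell costs, qualitatively mirroring the flattening between the 3.1 and 12.5 million cell results.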
